Improving peer assessment for students, peers, and instructors

Large-scale peer assessment in online classrooms creates unique educational opportunities. For the first time, students can see work from classmates on different continents, with different perspectives and strengths. The PeerAPI project is an experiment in tapping into these opportunities. It offers programmatic methods for improving peer assessment and requires minimal instructor involvement.

Use PeerAPI in your own class

(Send us email to get started)

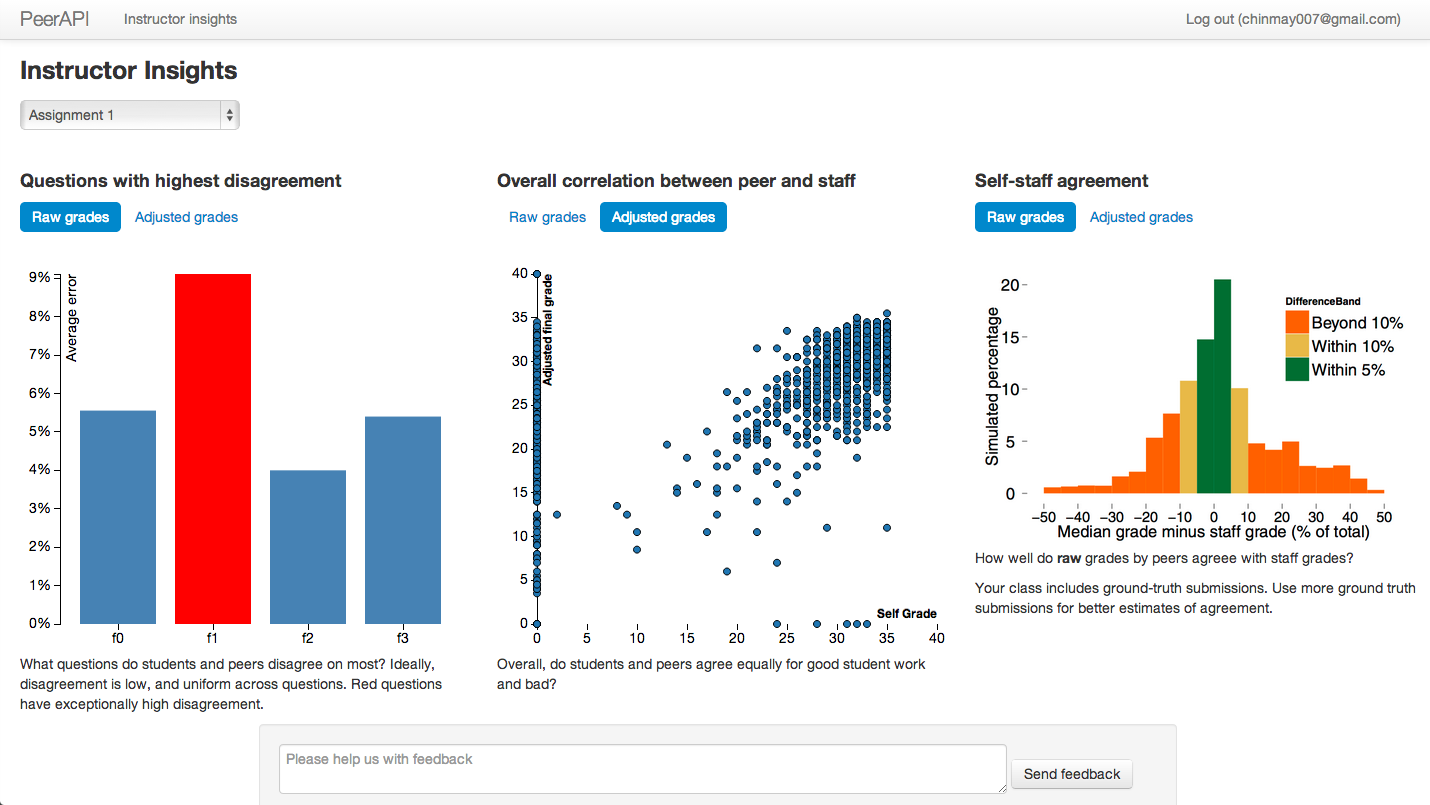

Smarter grading

Better algorithms

Peer assessment relies on consensus among independent raters. Modeling the grading patterns of these raters can help the system understand the consensus judgment better. Our experimental hypothesis is that this should result in more accurate grades for students, and potentially a lower grading burden on them.
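
As a rough illustration of what modeling grading patterns could look like, the sketch below estimates each rater's bias as their average deviation from the median score on the submissions they graded, then recomputes the consensus after removing that bias. This is an assumed, simplified approach for illustration and not necessarily the model PeerAPI uses; the function names and the `(rater, submission, score)` data layout are hypothetical.

```python
from collections import defaultdict
from statistics import mean, median


def estimate_rater_bias(ratings):
    """Estimate each rater's bias from (rater, submission, score) tuples.

    Bias here is the rater's mean deviation from the median score of each
    submission they graded (an illustrative assumption).
    """
    by_submission = defaultdict(list)
    for _, submission, score in ratings:
        by_submission[submission].append(score)
    consensus = {s: median(scores) for s, scores in by_submission.items()}

    deviations = defaultdict(list)
    for rater, submission, score in ratings:
        deviations[rater].append(score - consensus[submission])
    return {rater: mean(devs) for rater, devs in deviations.items()}


def corrected_grades(ratings):
    """Recompute the median grade per submission after removing rater bias."""
    bias = estimate_rater_bias(ratings)
    adjusted = defaultdict(list)
    for rater, submission, score in ratings:
        adjusted[submission].append(score - bias[rater])
    return {s: median(scores) for s, scores in adjusted.items()}


# Hypothetical data: rater "c" consistently grades 2 points high.
ratings = [
    ("a", "s1", 7), ("b", "s1", 7), ("c", "s1", 9),
    ("a", "s2", 5), ("b", "s2", 5), ("c", "s2", 7),
]
print(estimate_rater_bias(ratings))
print(corrected_grades(ratings))
```

A median is used for the consensus because it is less sensitive to a single outlying rater than a mean; a production system would likely need a more careful statistical model.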

Feedback about grading bias

When told of their grading bias, students change their grading behavior to reduce it. This results in more accurate grading and may also help students better understand and distinguish good work. The system provides this feedback automatically.
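
One way such automatic feedback might be generated is sketched below: compare the scores a student gave with the final grades of the same submissions and report the average difference. The function name, the message wording, and the `tolerance` threshold are assumptions made for illustration, not PeerAPI's actual behavior.

```python
from statistics import mean


def bias_feedback(own_scores, final_grades, tolerance=0.5):
    """Build a feedback message about a student's grading bias.

    own_scores and final_grades map submission id -> score.
    tolerance is a hypothetical cutoff below which no bias is reported.
    """
    shared = [s for s in own_scores if s in final_grades]
    if not shared:
        return "No graded submissions to compare yet."
    # Positive bias means the student graded higher than the final grade.
    bias = mean(own_scores[s] - final_grades[s] for s in shared)
    if abs(bias) <= tolerance:
        return "Your grades closely match the final grades."
    direction = "higher" if bias > 0 else "lower"
    return (
        f"On average, you graded {abs(bias):.1f} points {direction} "
        "than the final grade."
    )


message = bias_feedback({"s1": 9, "s2": 7}, {"s1": 7, "s2": 5})
print(message)
```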

Highlighting learning benefits

Students already intuitively understand the benefits of peer assessment. Our system tries to make these benefits explicit, with the aim of improving learning.

Did you see that?

In an online classroom, students often see work they would never encounter in a traditional classroom. Highlighting such learning opportunities may help students pay attention to the relevant parts and make assessment more enjoyable.

“What did I do wrong?”

Answering this question requires students to reflect on their own work and on that of others. We help bootstrap this reflection by pointing out good work by other students in the areas in which the student performed poorly.
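
A minimal sketch of how such pointers could be selected, assuming each submission is scored per rubric area: for every area where a student scored below a threshold, suggest the highest-scoring peers in that area. The data layout, the function name, and the `weak_below` and `top_n` parameters are hypothetical.

```python
def exemplars_for_weak_areas(student, scores, weak_below=3, top_n=1):
    """Suggest strong peer work for the areas where a student scored poorly.

    scores maps student name -> {rubric_area: score}.
    weak_below and top_n are illustrative thresholds, not PeerAPI settings.
    """
    suggestions = {}
    for area, own in scores[student].items():
        if own >= weak_below:
            continue  # the student did well enough in this area
        peers = [
            (other[area], name)
            for name, other in scores.items()
            if name != student and area in other
        ]
        peers.sort(reverse=True)  # highest scores first
        # Only suggest peers who actually scored better than the student.
        suggestions[area] = [name for score, name in peers[:top_n] if score > own]
    return suggestions


# Hypothetical rubric scores on a 1-5 scale.
scores = {
    "ana": {"layout": 2, "copy": 5},
    "ben": {"layout": 5, "copy": 3},
    "cy": {"layout": 4, "copy": 4},
}
print(exemplars_for_weak_areas("ana", scores))
```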